
Reporting Research Results in APA Style | Tips & Examples

Published on December 21, 2020 by Pritha Bhandari. Revised on January 17, 2024.

The results section of a quantitative research paper is where you summarize your data and report the findings of any relevant statistical analyses.

The APA manual provides rigorous guidelines for what to report in quantitative research papers in the fields of psychology, education, and other social sciences.

Use these standards to answer your research questions and report your data analyses in a complete and transparent way.


Table of contents

- What goes in your results section

- Introduce your data

- Summarize your data

- Report statistical results

- Presenting numbers effectively

- What doesn’t belong in your results section

- Frequently asked questions about results in APA

In APA style, the results section includes preliminary information about the participants and data, descriptive and inferential statistics, and the results of any exploratory analyses.

Include these in your results section:

- Participant flow and recruitment period. Report the number of participants at every stage of the study, as well as the dates when recruitment took place.

- Missing data. Identify the proportion of data that weren’t included in your final analysis and state the reasons.

- Any adverse events. Make sure to report any unexpected events or side effects (for clinical studies).

- Descriptive statistics. Summarize the primary and secondary outcomes of the study.

- Inferential statistics, including confidence intervals and effect sizes. Address the primary and secondary research questions by reporting the detailed results of your main analyses.

- Results of subgroup or exploratory analyses, if applicable. Place detailed results in supplementary materials.

Write up the results in the past tense because you’re describing the outcomes of a completed research study.


Before diving into your research findings, first describe the flow of participants at every stage of your study and whether any data were excluded from the final analysis.

Participant flow and recruitment period

It’s necessary to report any attrition, which is the decline in participants at every sequential stage of a study. That’s because an uneven number of participants across groups sometimes threatens internal validity and makes it difficult to compare groups. Be sure to also state all reasons for attrition.

If your study has multiple stages (e.g., pre-test, intervention, and post-test) and groups (e.g., experimental and control groups), a flow chart is the best way to report the number of participants in each group per stage and reasons for attrition.
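Before drawing a flow chart, it can help to tabulate the counts that will go into it. The sketch below is a minimal illustration, assuming a hypothetical study with two groups and three stages; the counts and the `attrition_table` helper are invented for the example.

```python
# Hypothetical participant counts at each stage, per group (illustrative only).
flow = {
    "experimental": {"pre-test": 60, "intervention": 55, "post-test": 51},
    "control": {"pre-test": 60, "intervention": 58, "post-test": 57},
}


def attrition_table(flow):
    """Return rows of (group, stage, n, dropped since the previous stage)."""
    rows = []
    for group, stages in flow.items():
        previous = None
        for stage, n in stages.items():
            # The first stage has no earlier stage to compare against.
            dropped = 0 if previous is None else previous - n
            rows.append((group, stage, n, dropped))
            previous = n
    return rows


for group, stage, n, dropped in attrition_table(flow):
    print(f"{group:<13}{stage:<13}n = {n:<4}dropped = {dropped}")
```

Each row gives the number remaining and the number lost since the previous stage. The reasons for attrition still need to be reported alongside these counts.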

Also report the dates when you recruited participants or performed follow-up sessions.

Missing data

Another key issue is the completeness of your dataset. It’s necessary to report both the amount of data that were missing or excluded and the reasons why.

Data can become unusable due to equipment malfunctions, improper storage, unexpected events, participant ineligibility, and so on. For each case, state the reason why the data were unusable.

Some data points may be removed from the final analysis because they are outliers—but you must be able to justify how you decided what to exclude.

If you applied any techniques for overcoming or compensating for lost data, report those as well.
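The amount of missing data is usually reported as a count and a proportion. A minimal sketch, assuming a hypothetical list of measurements in which `None` marks a missing observation:

```python
# Hypothetical reaction times in ms; None marks a missing observation.
responses = [512, None, 498, 530, None, 475, 501, 489, None, 520]


def missing_summary(values):
    """Return (number missing, total observations, proportion missing)."""
    total = len(values)
    n_missing = sum(1 for v in values if v is None)
    return n_missing, total, n_missing / total


n_missing, total, proportion = missing_summary(responses)
print(f"{n_missing} of {total} observations ({proportion:.1%}) were missing.")
```

The same summary can be computed separately for each reason (for example, equipment malfunction versus participant ineligibility) so that the reasons are reported along with the amounts.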

Adverse events

For clinical studies, report all events with serious consequences or any side effects that occurred.

Descriptive statistics summarize your data for the reader. Present descriptive statistics for each primary, secondary, and subgroup analysis.

Don’t provide formulas or citations for commonly used statistics (e.g., standard deviation) – but do provide them for new or rare equations.

Descriptive statistics

The exact descriptive statistics that you report depend on the types of data in your study. Categorical variables can be reported using proportions, while quantitative data can be reported using means and standard deviations. For a large set of numbers, a table is the most effective presentation format.

Include sample sizes (overall and for each group) as well as appropriate measures of central tendency and variability for the outcomes in your results section. For every point estimate, add a clearly labelled measure of variability as well.

Be sure to note how you combined data to come up with variables of interest. For every variable of interest, explain how you operationalized it.
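The sample size, mean, and standard deviation for each group can be computed with Python's standard `statistics` module. This is a minimal sketch with hypothetical scores; the `describe` helper and the group names are assumptions made for illustration.

```python
from statistics import mean, stdev

# Hypothetical test scores for two groups (illustrative only).
scores = {
    "experimental": [78, 85, 92, 88, 75, 90],
    "control": [70, 72, 81, 68, 77, 74],
}


def describe(values):
    """Return sample size, mean, and sample standard deviation."""
    return len(values), mean(values), stdev(values)


for group, values in scores.items():
    n, m, sd = describe(values)
    print(f"{group}: n = {n}, M = {m:.2f}, SD = {sd:.2f}")
```

Note that `stdev` computes the sample standard deviation (dividing by n − 1), which is the usual choice when describing a sample drawn from a larger population.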

According to APA journal standards, it’s necessary to report all relevant hypothesis tests performed, estimates of effect sizes, and confidence intervals.

When reporting statistical results, you should first address primary research questions before moving onto secondary research questions and any exploratory or subgroup analyses.

Present the results of tests in the order that you performed them—report the outcomes of main tests before post-hoc tests, for example. Don’t leave out any relevant results, even if they don’t support your hypothesis.

Inferential statistics

For each statistical test performed, first restate the hypothesis, then state whether your hypothesis was supported and provide the outcomes that led you to that conclusion.

Report the following for each hypothesis test:

- the test statistic value,

- the degrees of freedom,

- the exact p value (unless it is less than 0.001),

- the magnitude and direction of the effect.
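The items in this list can be assembled into a single report string. The sketch below is one possible way to do it for a t test, assuming the APA convention of dropping the leading zero for values that cannot exceed 1; the function names and the input values are hypothetical.

```python
def format_p(p):
    """Format a p value: exact to three decimals, or '< .001' when smaller."""
    if p < 0.001:
        return "p < .001"
    # A p value cannot exceed 1, so the leading zero is dropped.
    return "p = " + f"{p:.3f}".lstrip("0")


def format_t_test(t, df, p):
    """Build a report string from the test statistic, df, and p value."""
    return f"t({df}) = {t:.2f}, {format_p(p)}"


print(format_t_test(2.31, 28, 0.028))    # prints "t(28) = 2.31, p = .028"
print(format_t_test(5.02, 28, 0.00003))  # prints "t(28) = 5.02, p < .001"
```

The sign of the test statistic conveys the direction of the effect; the magnitude is reported separately as an effect size.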

When reporting complex data analyses, such as factor analysis or multivariate analysis, present the models estimated in detail, and state the statistical software used. Make sure to report any violations of statistical assumptions or problems with estimation.

Effect sizes and confidence intervals

For each hypothesis test performed, you should present confidence intervals and estimates of effect sizes.

Confidence intervals are useful for showing the variability around point estimates. They should be included whenever you report population parameter estimates.

Effect sizes indicate how impactful the outcomes of a study are. But since they are estimates, it’s recommended that you also provide confidence intervals of effect sizes.
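As an illustration, the sketch below computes one common effect size (Cohen's d with a pooled standard deviation) and an approximate 95% confidence interval for a mean. The data are hypothetical, and the interval uses a normal approximation, which is an assumption that suits large samples; for small samples such as this one, a t-based interval would be wider.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev

# Hypothetical scores for two groups (illustrative only).
treatment = [78, 85, 92, 88, 75, 90]
control = [70, 72, 81, 68, 77, 74]


def cohens_d(a, b):
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var)


def mean_ci(values, level=0.95):
    """Approximate confidence interval for a mean (normal approximation)."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    margin = z * stdev(values) / sqrt(len(values))
    return mean(values) - margin, mean(values) + margin


d = cohens_d(treatment, control)
low, high = mean_ci(treatment)
print(f"d = {d:.2f}; treatment M = {mean(treatment):.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```

Reporting the interval next to the point estimate shows readers how precisely the quantity was estimated.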

Subgroup or exploratory analyses

Briefly report the results of any other planned or exploratory analyses you performed. These may include subgroup analyses as well.

Subgroup analyses come with a high chance of false positive results, because performing a large number of comparison or correlation tests increases the chances of finding significant results.

If you find significant results in these analyses, make sure to appropriately report them as exploratory (rather than confirmatory) results to avoid overstating their importance.

While these analyses can be reported in less detail in the main text, you can provide the full analyses in supplementary materials.
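The false positive risk mentioned above can be made concrete. Assuming the tests are independent and each uses the same significance level, the probability of at least one false positive across m tests is 1 − (1 − α)^m. The function name below is hypothetical.

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** n_tests


for n_tests in (1, 5, 10, 20):
    rate = familywise_error_rate(0.05, n_tests)
    print(f"{n_tests:>2} tests: {rate:.2f}")
```

With α = .05, the rate is about .40 for 10 tests and about .64 for 20, which is why significant subgroup findings are best labelled as exploratory.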

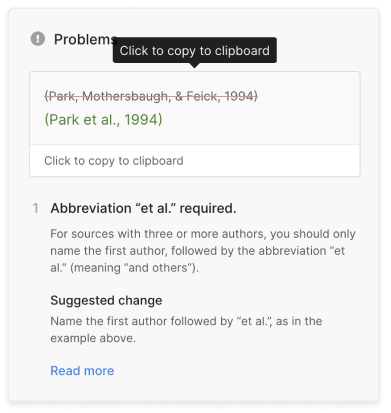

Scribbr Citation Checker New

The AI-powered Citation Checker helps you avoid common mistakes such as:

- Missing commas and periods

- Incorrect usage of “et al.”

- Ampersands (&) in narrative citations

- Missing reference entries

To effectively present numbers, use a mix of text, tables, and figures where appropriate:

- To present three or fewer numbers, try a sentence,

- To present between 4 and 20 numbers, try a table,

- To present more than 20 numbers, try a figure.

Since these are general guidelines, use your own judgment and feedback from others for effective presentation of numbers.
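The thresholds in this rule of thumb can be written as a small helper. This is only a sketch of the guideline as stated above; `suggest_format` is a hypothetical name, and the result is a starting point rather than a rule.

```python
def suggest_format(n_numbers):
    """Suggest a presentation format from the count of numbers to report."""
    if n_numbers <= 3:
        return "sentence"
    if n_numbers <= 20:
        return "table"
    return "figure"


for n in (2, 12, 45):
    print(f"{n} numbers: try a {suggest_format(n)}")
```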

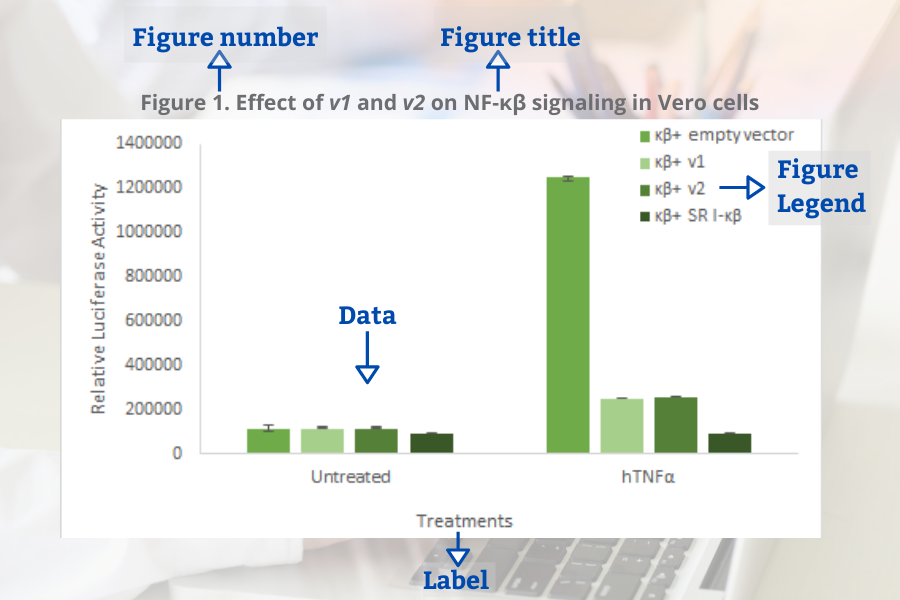

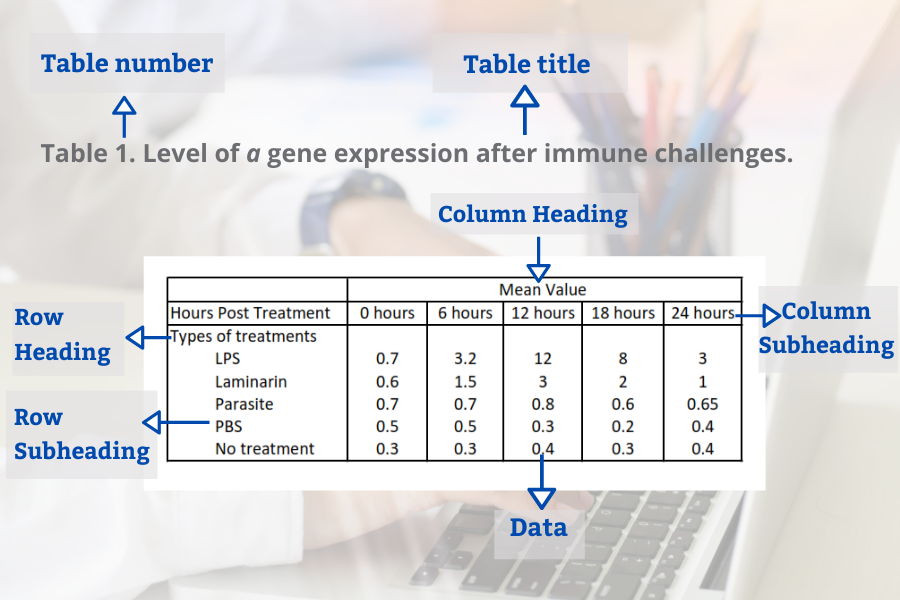

Tables and figures should be numbered and have titles, along with relevant notes. Make sure to present data only once throughout the paper and refer to any tables and figures in the text.

Formatting statistics and numbers

It’s important to follow capitalization, italicization, and abbreviation rules when referring to statistics in your paper. There are specific format guidelines for reporting statistics in APA, as well as general rules about writing numbers.

If you are unsure of how to present specific symbols, look up the detailed APA guidelines or other papers in your field.

It’s important to provide a complete picture of your data analyses and outcomes in a concise way. For that reason, raw data and any interpretations of your results are not included in the results section.

Raw data

It’s rarely appropriate to include raw data in your results section. Instead, you should always save the raw data securely and make them available and accessible to any other researchers who request them.

Making scientific research available to others is a key part of academic integrity and open science.

Interpretation or discussion of results

This belongs in your discussion section. Your results section is where you objectively report all relevant findings and leave them open for interpretation by readers.

While you should state whether the findings of statistical tests lend support to your hypotheses, refrain from forming conclusions to your research questions in the results section.

Explanation of how statistical tests work

For the sake of concise writing, you can safely assume that readers of your paper have professional knowledge of how statistical inferences work.

In an APA results section, you should generally report the following:

- Participant flow and recruitment period.

- Missing data and any adverse events.

- Descriptive statistics about your samples.

- Inferential statistics, including confidence intervals and effect sizes.

- Results of any subgroup or exploratory analyses, if applicable.

According to the APA guidelines, you should report enough detail on inferential statistics so that your readers understand your analyses. Report the following for each hypothesis test:

- the test statistic value

- the degrees of freedom

- the exact p value (unless it is less than 0.001)

- the magnitude and direction of the effect

You should also present confidence intervals and estimates of effect sizes where relevant.

In APA style, statistics can be presented in the main text or as tables or figures. To decide how to present numbers, you can follow APA guidelines:

- To present three or fewer numbers, try a sentence,

- To present between 4 and 20 numbers, try a table,

- To present more than 20 numbers, try a figure.

Results are usually written in the past tense, because they are describing the outcome of completed actions.

The results chapter or section simply and objectively reports what you found, without speculating on why you found these results. The discussion interprets the meaning of the results, puts them in context, and explains why they matter.

In qualitative research, results and discussion are sometimes combined. But in quantitative research, it’s considered important to separate the objective results from your interpretation of them.

Cite this Scribbr article


Bhandari, P. (2024, January 17). Reporting Research Results in APA Style | Tips & Examples. Scribbr. Retrieved August 27, 2024, from https://www.scribbr.com/apa-style/results-section/



Writing a scientific paper.


Writing a "good" results section

Figures and Captions in Lab Reports

"Results Checklist" from: How to Write a Good Scientific Paper. Chris A. Mack. SPIE. 2018.

Additional tips for results sections.


This is the core of the paper. Don't start the results section with methods you left out of the Materials and Methods section. You need to give an overall description of the experiments and present the data you found.

- Make factual statements supported by evidence. Keep them short, without excess words.

- Present representative data rather than endlessly repetitive data.

- Discuss variables only if they had an effect (positive or negative).

- Use meaningful statistics.

- Avoid redundancy. If it is in the tables or captions, you may not need to repeat it.

A short article by Dr. Brett Couch and Dr. Deena Wassenberg, Biology Program, University of Minnesota

- Present the results of the paper, in logical order, using tables and graphs as necessary.

- Explain the results and show how they help to answer the research questions posed in the Introduction. Evidence does not explain itself; the results must be presented and then explained.

- Avoid presenting results that are never discussed, presenting results in chronological order rather than logical order, and ignoring results that do not support the conclusions.

- Number tables and figures separately beginning with 1 (i.e. Table 1, Table 2, Figure 1, etc.).

- Do not attempt to evaluate the results in this section. Report only what you found; hold all discussion of the significance of the results for the Discussion section.

- It is not necessary to describe every step of your statistical analyses. Scientists understand all about null hypotheses, rejection rules, and so forth and do not need to be reminded of them. Just say something like, "Honeybees did not use the flowers in proportion to their availability (χ² = 7.9, p < 0.05, d.f. = 4, chi-square test)." Likewise, cite tables and figures without describing in detail how the data were manipulated. Explanations of this sort should appear in a legend or caption written on the same page as the figure or table.

- You must refer in the text to each figure or table you include in your paper.

- Tables generally should report summary-level data, such as means ± standard deviations, rather than all your raw data. A long list of all your individual observations will mean much less than a few concise, easy-to-read tables or figures that bring out the main findings of your study.

- Only use a figure (graph) when the data lend themselves to a good visual representation. Avoid using figures that show too many variables or trends at once, because they can be hard to understand.

From: https://writingcenter.gmu.edu/guides/imrad-results-discussion
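A one-line report like the chi-square example in the list above rests on three values: the test statistic, the degrees of freedom, and the p value. The sketch below shows how they might be obtained for a goodness-of-fit test. The visit counts are hypothetical, the function names are invented for illustration, and the p value uses a closed-form expression that holds only when the degrees of freedom are even; a statistics package would normally handle the general case.

```python
from math import exp, factorial

# Hypothetical honeybee visits to five equally available flower types.
observed = [30, 14, 22, 10, 24]
expected = [20, 20, 20, 20, 20]


def chi_square_gof(observed, expected):
    """Return the chi-square statistic and degrees of freedom."""
    stat = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    return stat, len(observed) - 1


def chi_square_p_even_df(stat, df):
    """Upper-tail p value for a chi-square statistic with even df."""
    if df <= 0 or df % 2:
        raise ValueError("this closed form needs a positive, even df")
    half = stat / 2
    return exp(-half) * sum(half ** j / factorial(j) for j in range(df // 2))


stat, df = chi_square_gof(observed, expected)
p = chi_square_p_even_df(stat, df)
print(f"chi-square = {stat:.2f}, d.f. = {df}, p = {p:.3f}")
```

Computing the exact p value this way allows the report to state it directly rather than as an inequality.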


- Last Updated: Aug 4, 2023 9:33 AM

- URL: https://guides.lib.uci.edu/scientificwriting


How to Write the Results Section: Guide to Structure and Key Points


The ‘Results’ section of a research paper, like the ‘Introduction’ and other key parts, attracts significant attention from editors, reviewers, and readers. The reason lies in its critical role: revealing the key findings of a study and demonstrating how your research fills a knowledge gap in your field of study. Given its importance, crafting a clear and logically structured results section is essential.

In this article, we will discuss the key elements of an effective results section and share strategies for making it concise and engaging. We hope this guide will help you quickly grasp ways of writing the results section, avoid common pitfalls, and make your writing process more efficient and effective.

Structure of the results section

Briefly restate the research topic in the introduction: Although the main purpose of the results section in a research paper is to list the notable findings of a study, it is customary to start with a brief repetition of the research question. This helps refocus the reader, allowing them to better appreciate the relevance of the findings. Additionally, restating the research question establishes a connection to the previous section of the paper, creating a smoother flow of information.

Systematically present your research findings: Address the primary research question first, followed by the secondary research questions. If your research addresses multiple questions, mention the findings related to each one individually to ensure clarity and coherence.

Represent your results visually: Graphs, tables, and other figures can help illustrate the findings of your paper, especially if there is a large amount of data in the results. As a rule of thumb, use a visual medium like a graph or a table if you wish to present three or more statistical values simultaneously.

Graphical or tabular representations of data can also make your results section more visually appealing. Remember, an appealing and well-organized results section can help peer reviewers better understand the merits of your research, thereby increasing your chances of publication.

Practical guidance for writing an effective ‘Results’ section

- Always use simple and plain language. Avoid the use of uncertain or unclear expressions.

- The findings of the study must be expressed in an objective and unbiased manner. While it is acceptable to correlate certain findings, it is best to avoid over-interpreting the results. In addition, avoid using subjective or emotional words, such as “interestingly” or “unfortunately”, to describe the results, as this may cause readers to doubt the objectivity of the paper.

- Balance simplicity with comprehensiveness. For statistical data, simply describe the relevant tests and explain their results without mentioning raw data. If the study involves multiple hypotheses, describe the results for each one separately to avoid confusion and aid understanding. To enhance credibility, ensure that negative results, if any, are included in this section, even if they do not support the research hypothesis.

- Wherever possible, use illustrations like tables, figures, charts, or other visual representations to highlight the results of your research paper. Mention these illustrations in the text, but do not repeat the information that they convey¹.

Difference between data, results, and discussion sections

Data, results, and discussion sections all communicate the findings of a study, but each serves a distinct purpose with varying levels of interpretation.

In the results section, one cannot provide data without interpreting its relevance or make statements without citing data². In a sense, the results section does not draw connections between different data points. Therefore, there is a certain level of interpretation involved in drawing results out of data.

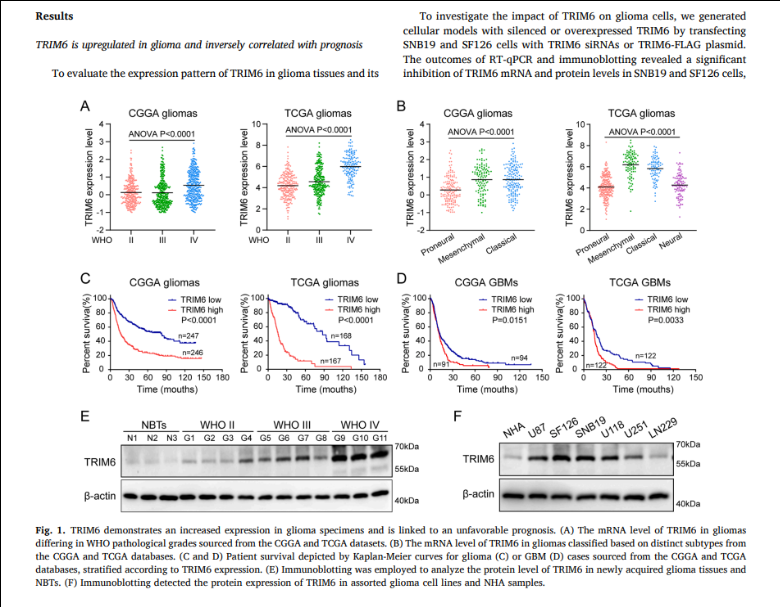

(The example is intended to showcase how the visual elements and text in the results section complement each other³. The academic viewpoints included in the illustrative screenshots should not be used as references.)

The discussion section allows authors even more interpretive freedom compared to the results section. Here, data and patterns within the data are compared with the findings from other studies to make more generalized points. Unlike the results section, which focuses purely on factual data, the discussion section touches upon hypothetical information, drawing conjectures and suggesting future directions for research.

The ‘Results’ section serves as the core of a research paper, capturing readers’ attention and providing insights into the study’s essence. Regardless of the subject of your research paper, a well-written results section can generate interest in your research. By following the tips outlined here, you can create a results section that effectively communicates your findings and invites further exploration. Remember, clarity is the key, and with the right approach, your results section can guide readers through the intricacies of your research.


Reference

- Cetin, S., & Hackam, D. J. (2005). An approach to the writing of a scientific manuscript. Journal of Surgical Research, 128(2), 165–167. https://doi.org/10.1016/j.jss.2005.07.002

- Bahadoran, Z., Mirmiran, P., Zadeh-Vakili, A., Hosseinpanah, F., & Ghasemi, A. (2019). The Principles of Biomedical Scientific Writing: Results. International Journal of Endocrinology and Metabolism, In Press. https://doi.org/10.5812/ijem.92113

- Guo, J., Wang, J., Zhang, P., Wen, P., Zhang, S., Dong, X., & Dong, J. (2024). TRIM6 promotes glioma malignant progression by enhancing FOXO3A ubiquitination and degradation. Translational Oncology, 46, 101999. https://doi.org/10.1016/j.tranon.2024.101999


How to Write an Effective Results Section

Affiliation.

- 1 Rothman Orthopaedics Institute, Philadelphia, PA.

- PMID: 31145152

- DOI: 10.1097/BSD.0000000000000845

Developing a well-written research paper is an important step in completing a scientific study. This paper is where the principal investigator and co-authors report the purpose, methods, findings, and conclusions of the study. A key element of writing a research paper is to clearly and objectively report the study's findings in the Results section. The Results section is where the authors inform the readers about the findings from the statistical analysis of the data collected to operationalize the study hypothesis, optimally adding novel information to the collective knowledge on the subject matter. By utilizing clear, concise, and well-organized writing techniques and visual aids in the reporting of the data, the author is able to construct a case for the research question at hand even without interpreting the data.


Chapter 11: Presenting Your Research

Writing a Research Report in American Psychological Association (APA) Style

Learning Objectives

- Identify the major sections of an APA-style research report and the basic contents of each section.

- Plan and write an effective APA-style research report.

In this section, we look at how to write an APA-style empirical research report, an article that presents the results of one or more new studies. Recall that the standard sections of an empirical research report provide a kind of outline. Here we consider each of these sections in detail, including what information it contains, how that information is formatted and organized, and tips for writing each section. At the end of this section is a sample APA-style research report that illustrates many of these principles.

Sections of a Research Report

Title Page and Abstract

An APA-style research report begins with a title page. The title is centred in the upper half of the page, with each important word capitalized. The title should clearly and concisely (in about 12 words or fewer) communicate the primary variables and research questions. This sometimes requires a main title followed by a subtitle that elaborates on the main title, in which case the main title and subtitle are separated by a colon. Here are some titles from recent issues of professional journals published by the American Psychological Association.

- Sex Differences in Coping Styles and Implications for Depressed Mood

- Effects of Aging and Divided Attention on Memory for Items and Their Contexts

- Computer-Assisted Cognitive Behavioural Therapy for Child Anxiety: Results of a Randomized Clinical Trial

- Virtual Driving and Risk Taking: Do Racing Games Increase Risk-Taking Cognitions, Affect, and Behaviour?

Below the title are the authors’ names and, on the next line, their institutional affiliation—the university or other institution where the authors worked when they conducted the research. As we have already seen, the authors are listed in an order that reflects their contribution to the research. When multiple authors have made equal contributions to the research, they often list their names alphabetically or in a randomly determined order.

In some areas of psychology, the titles of many empirical research reports are informal in a way that is perhaps best described as “cute.” They usually take the form of a play on words or a well-known expression that relates to the topic under study. Here are some examples from recent issues of the journal Psychological Science.

- “Smells Like Clean Spirit: Nonconscious Effects of Scent on Cognition and Behavior”

- “Time Crawls: The Temporal Resolution of Infants’ Visual Attention”

- “Scent of a Woman: Men’s Testosterone Responses to Olfactory Ovulation Cues”

- “Apocalypse Soon?: Dire Messages Reduce Belief in Global Warming by Contradicting Just-World Beliefs”

- “Serial vs. Parallel Processing: Sometimes They Look Like Tweedledum and Tweedledee but They Can (and Should) Be Distinguished”

- “How Do I Love Thee? Let Me Count the Words: The Social Effects of Expressive Writing”

Individual researchers differ quite a bit in their preference for such titles. Some use them regularly, while others never use them. What might be some of the pros and cons of using cute article titles?

For articles that are being submitted for publication, the title page also includes an author note that lists the authors’ full institutional affiliations, any acknowledgments the authors wish to make to agencies that funded the research or to colleagues who commented on it, and contact information for the authors. For student papers that are not being submitted for publication—including theses—author notes are generally not necessary.

The abstract is a summary of the study. It is the second page of the manuscript and is headed with the word Abstract. The first line is not indented. The abstract presents the research question, a summary of the method, the basic results, and the most important conclusions. Because the abstract is usually limited to about 200 words, it can be a challenge to write a good one.

Introduction

The introduction begins on the third page of the manuscript. The heading at the top of this page is the full title of the manuscript, with each important word capitalized as on the title page. The introduction includes three distinct subsections, although these are typically not identified by separate headings. The opening introduces the research question and explains why it is interesting, the literature review discusses relevant previous research, and the closing restates the research question and comments on the method used to answer it.

The Opening

The opening, which is usually a paragraph or two in length, introduces the research question and explains why it is interesting. To capture the reader’s attention, researcher Daryl Bem recommends starting with general observations about the topic under study, expressed in ordinary language (not technical jargon). These should be observations about people and their behaviour (not about researchers or their research; Bem, 2003). Concrete examples are often very useful here. According to Bem, this would be a poor way to begin a research report:

Festinger’s theory of cognitive dissonance received a great deal of attention during the latter part of the 20th century (p. 191).

The following would be much better:

The individual who holds two beliefs that are inconsistent with one another may feel uncomfortable. For example, the person who knows that he or she enjoys smoking but believes it to be unhealthy may experience discomfort arising from the inconsistency or disharmony between these two thoughts or cognitions. This feeling of discomfort was called cognitive dissonance by social psychologist Leon Festinger (1957), who suggested that individuals will be motivated to remove this dissonance in whatever way they can (p. 191).

After capturing the reader’s attention, the opening should go on to introduce the research question and explain why it is interesting. Will the answer fill a gap in the literature? Will it provide a test of an important theory? Does it have practical implications? Giving readers a clear sense of what the research is about and why they should care about it will motivate them to continue reading the literature review—and will help them make sense of it.

Breaking the Rules

Researcher Larry Jacoby reported several studies showing that a word that people see or hear repeatedly can seem more familiar even when they do not recall the repetitions, and that this tendency is especially pronounced among older adults. He opened his article with the following humorous anecdote:

A friend whose mother is suffering symptoms of Alzheimer’s disease (AD) tells the story of taking her mother to visit a nursing home, preliminary to her mother’s moving there. During an orientation meeting at the nursing home, the rules and regulations were explained, one of which regarded the dining room. The dining room was described as similar to a fine restaurant except that tipping was not required. The absence of tipping was a central theme in the orientation lecture, mentioned frequently to emphasize the quality of care along with the advantages of having paid in advance. At the end of the meeting, the friend’s mother was asked whether she had any questions. She replied that she only had one question: “Should I tip?” (Jacoby, 1999, p. 3)

Although both humour and personal anecdotes are generally discouraged in APA-style writing, this example is a highly effective way to start because it both engages the reader and provides an excellent real-world example of the topic under study.

The Literature Review

Immediately after the opening comes the literature review, which describes relevant previous research on the topic and can be anywhere from several paragraphs to several pages in length. However, the literature review is not simply a list of past studies. Instead, it constitutes a kind of argument for why the research question is worth addressing. By the end of the literature review, readers should be convinced that the research question makes sense and that the present study is a logical next step in the ongoing research process.

Like any effective argument, the literature review must have some kind of structure. For example, it might begin by describing a phenomenon in a general way along with several studies that demonstrate it, then describing two or more competing theories of the phenomenon, and finally presenting a hypothesis to test one or more of the theories. Or it might describe one phenomenon, then describe another phenomenon that seems inconsistent with the first one, then propose a theory that resolves the inconsistency, and finally present a hypothesis to test that theory. In applied research, it might describe a phenomenon or theory, then describe how that phenomenon or theory applies to some important real-world situation, and finally suggest a way to test whether it does, in fact, apply to that situation.

Looking at the literature review in this way emphasizes a few things. First, it is extremely important to start with an outline of the main points that you want to make, organized in the order that you want to make them. The basic structure of your argument, then, should be apparent from the outline itself. Second, it is important to emphasize the structure of your argument in your writing. One way to do this is to begin the literature review by summarizing your argument even before you begin to make it. “In this article, I will describe two apparently contradictory phenomena, present a new theory that has the potential to resolve the apparent contradiction, and finally present a novel hypothesis to test the theory.” Another way is to open each paragraph with a sentence that summarizes the main point of the paragraph and links it to the preceding points. These opening sentences provide the “transitions” that many beginning researchers have difficulty with. Instead of beginning a paragraph by launching into a description of a previous study, such as “Williams (2004) found that…,” it is better to start by indicating something about why you are describing this particular study. Here are some simple examples:

Another example of this phenomenon comes from the work of Williams (2004).

Williams (2004) offers one explanation of this phenomenon.

An alternative perspective has been provided by Williams (2004).

We used a method based on the one used by Williams (2004).

Finally, remember that your goal is to construct an argument for why your research question is interesting and worth addressing—not necessarily why your favourite answer to it is correct. In other words, your literature review must be balanced. If you want to emphasize the generality of a phenomenon, then of course you should discuss various studies that have demonstrated it. However, if there are other studies that have failed to demonstrate it, you should discuss them too. Or if you are proposing a new theory, then of course you should discuss findings that are consistent with that theory. However, if there are other findings that are inconsistent with it, again, you should discuss them too. It is acceptable to argue that the balance of the research supports the existence of a phenomenon or is consistent with a theory (and that is usually the best that researchers in psychology can hope for), but it is not acceptable to ignore contradictory evidence. Besides, a large part of what makes a research question interesting is uncertainty about its answer.

The Closing

The closing of the introduction—typically the final paragraph or two—usually includes two important elements. The first is a clear statement of the main research question or hypothesis. This statement tends to be more formal and precise than in the opening and is often expressed in terms of operational definitions of the key variables. The second is a brief overview of the method and some comment on its appropriateness. Here, for example, is how Darley and Latané (1968) [2] concluded the introduction to their classic article on the bystander effect:

These considerations lead to the hypothesis that the more bystanders to an emergency, the less likely, or the more slowly, any one bystander will intervene to provide aid. To test this proposition it would be necessary to create a situation in which a realistic “emergency” could plausibly occur. Each subject should also be blocked from communicating with others to prevent his getting information about their behaviour during the emergency. Finally, the experimental situation should allow for the assessment of the speed and frequency of the subjects’ reaction to the emergency. The experiment reported below attempted to fulfill these conditions. (p. 378)

Thus the introduction leads smoothly into the next major section of the article—the method section.

Method

The method section is where you describe how you conducted your study. An important principle for writing a method section is that it should be clear and detailed enough that other researchers could replicate the study by following your “recipe.” This means that it must describe all the important elements of the study: basic demographic characteristics of the participants, how they were recruited, whether they were randomly assigned, how the variables were manipulated or measured, how counterbalancing was accomplished, and so on. At the same time, it should avoid irrelevant details such as the fact that the study was conducted in Classroom 37B of the Industrial Technology Building or that the questionnaire was double-sided and completed using pencils.

The method section begins immediately after the introduction ends with the heading “Method” (not “Methods”) centred on the page. Immediately after this is the subheading “Participants,” left justified and in italics. The participants subsection indicates how many participants there were, the number of women and men, some indication of their age, other demographics that may be relevant to the study, and how they were recruited, including any incentives given for participation.

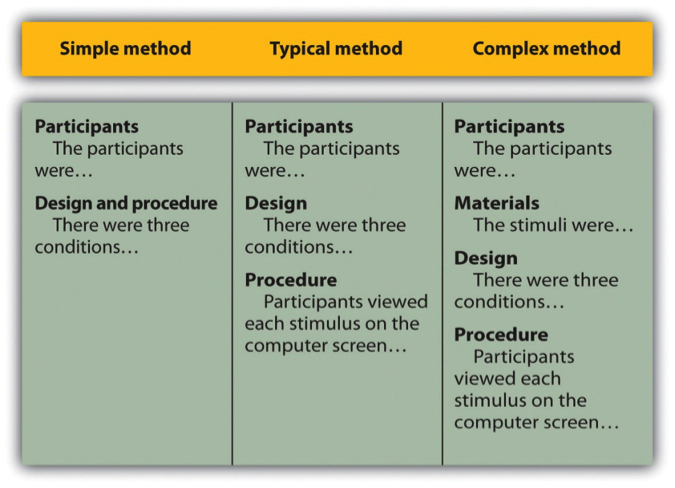

After the participants section, the structure can vary a bit. Figure 11.1 shows three common approaches. In the first, the participants section is followed by a design and procedure subsection, which describes the rest of the method. This works well for methods that are relatively simple and can be described adequately in a few paragraphs. In the second approach, the participants section is followed by separate design and procedure subsections. This works well when both the design and the procedure are relatively complicated and each requires multiple paragraphs.

What is the difference between design and procedure? The design of a study is its overall structure. What were the independent and dependent variables? Was the independent variable manipulated, and if so, was it manipulated between or within subjects? How were the variables operationally defined? The procedure is how the study was carried out. It often works well to describe the procedure in terms of what the participants did rather than what the researchers did. For example, the participants gave their informed consent, read a set of instructions, completed a block of four practice trials, completed a block of 20 test trials, completed two questionnaires, and were debriefed and excused.

In the third basic way to organize a method section, the participants subsection is followed by a materials subsection before the design and procedure subsections. This works well when there are complicated materials to describe. This might mean multiple questionnaires, written vignettes that participants read and respond to, perceptual stimuli, and so on. The heading of this subsection can be modified to reflect its content. Instead of “Materials,” it can be “Questionnaires,” “Stimuli,” and so on.

Results

The results section is where you present the main results of the study, including the results of the statistical analyses. Although it does not include the raw data (individual participants’ responses or scores), researchers should save their raw data and make them available to other researchers who request them. Several journals now encourage the open sharing of raw data online.

Although there are no standard subsections, it is still important for the results section to be logically organized. Typically it begins with certain preliminary issues. One is whether any participants or responses were excluded from the analyses and why. The rationale for excluding data should be described clearly so that other researchers can decide whether it is appropriate. A second preliminary issue is how multiple responses were combined to produce the primary variables in the analyses. For example, if participants rated the attractiveness of 20 stimulus people, you might have to explain that you began by computing the mean attractiveness rating for each participant. Or if they recalled as many items as they could from a study list of 20 words, did you count the number correctly recalled, compute the percentage correctly recalled, or perhaps compute the number correct minus the number incorrect? A third preliminary issue is the reliability of the measures. This is where you would present test-retest correlations, Cronbach’s α, or other statistics to show that the measures are consistent across time and across items. A final preliminary issue is whether the manipulation was successful. This is where you would report the results of any manipulation checks.
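The preliminary computations described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the function names and the tiny data set are hypothetical, each inner list holds one participant's scores across items, and Cronbach's α is computed with the standard formula based on sample variances.

```python
from statistics import mean, variance


def participant_means(ratings):
    """Mean rating per participant; `ratings` holds one list of item scores per participant."""
    return [mean(row) for row in ratings]


def recall_scores(n_correct, n_incorrect, list_length):
    """Three alternative ways to score one participant's recall of a study list."""
    return {
        "number_correct": n_correct,
        "percent_correct": 100 * n_correct / list_length,
        "correct_minus_incorrect": n_correct - n_incorrect,
    }


def cronbach_alpha(ratings):
    """Cronbach's alpha for a participants-by-items table of scores."""
    k = len(ratings[0])                                    # number of items
    item_variances = [variance(col) for col in zip(*ratings)]
    total_variance = variance([sum(row) for row in ratings])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: four participants, three items each.
example_ratings = [[4, 5, 4], [2, 3, 2], [5, 5, 4], [3, 3, 3]]
print(participant_means(example_ratings))
print(recall_scores(n_correct=12, n_incorrect=3, list_length=20))
print(round(cronbach_alpha(example_ratings), 3))
```

Whichever scoring rule you choose, the results section should state it explicitly so that readers know how the primary variables were produced.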

The results section should then tackle the primary research questions, one at a time. Again, there should be a clear organization. One approach would be to answer the most general questions and then proceed to answer more specific ones. Another would be to answer the main question first and then to answer secondary ones. Regardless, Bem (2003) [3] suggests the following basic structure for discussing each new result:

- Remind the reader of the research question.

- Give the answer to the research question in words.

- Present the relevant statistics.

- Qualify the answer if necessary.

- Summarize the result.

Notice that only Step 3 necessarily involves numbers. The rest of the steps involve presenting the research question and the answer to it in words. In fact, the basic results should be clear even to a reader who skips over the numbers.
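As a rough illustration of Step 3, the sketch below formats a t-test result the way APA-style reports usually print it: the test statistic to two decimals, and the p value without a leading zero, or as p < .001 when it is smaller than that. The function names and the numbers are assumptions made for this example and do not come from any study discussed here. Plain text cannot show the italics that APA style uses for statistical symbols.

```python
def format_p(p):
    """Format a p value APA-style: no leading zero, and 'p < .001' for very small values."""
    if p < 0.001:
        return "p < .001"
    return "p = " + f"{p:.3f}".lstrip("0")


def format_t(t, df, p):
    """Format a t-test result as 't(df) = value, p = value'."""
    return f"t({df}) = {t:.2f}, {format_p(p)}"


# Hypothetical values, for illustration only.
print(format_t(2.45, 38, 0.019))
print(format_p(0.0004))
```

The words around such a statistic (the reminder of the question, the verbal answer, the qualification, and the summary) still have to carry the result on their own.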

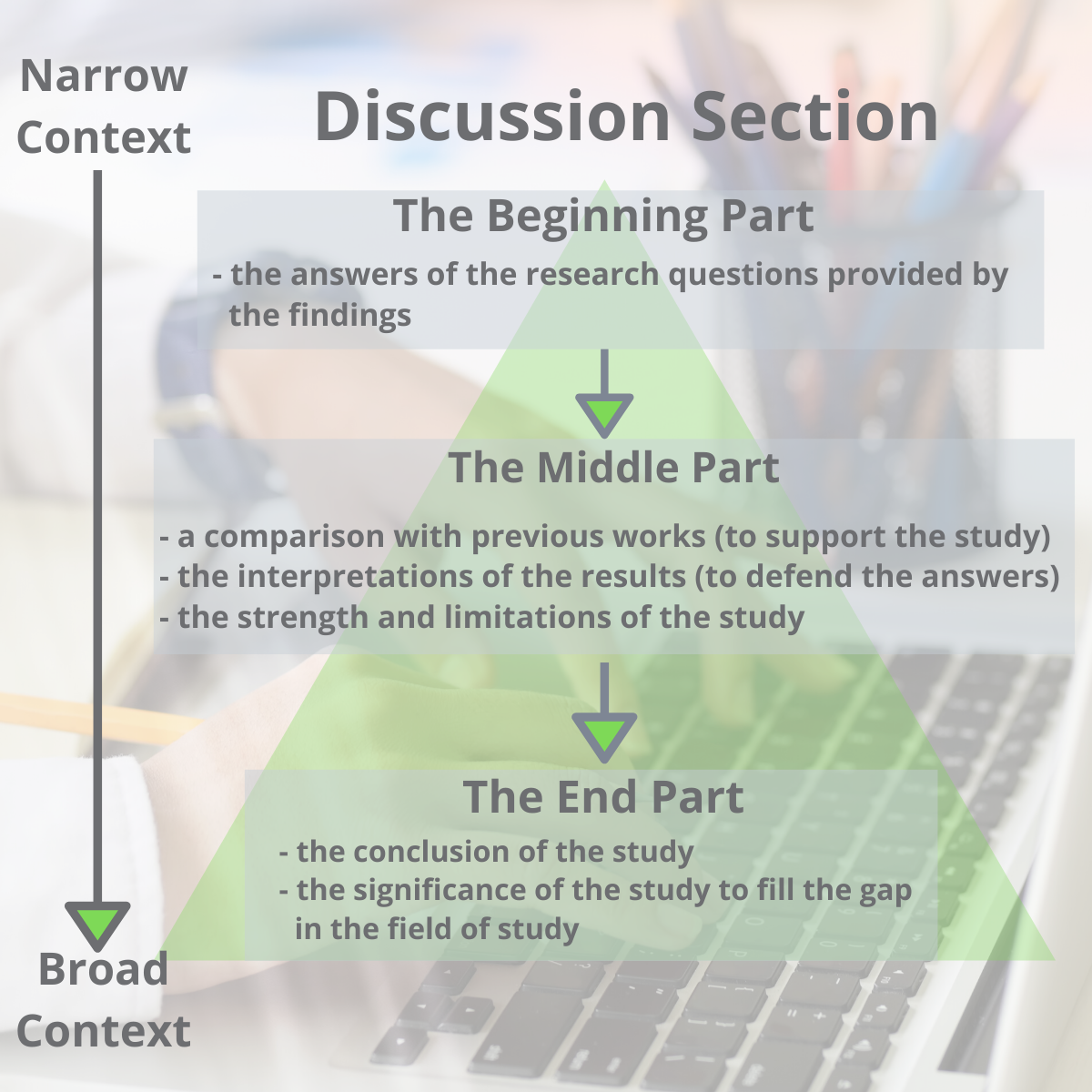

Discussion

The discussion is the last major section of the research report. Discussions usually consist of some combination of the following elements:

- Summary of the research

- Theoretical implications

- Practical implications

- Limitations

- Suggestions for future research

The discussion typically begins with a summary of the study that provides a clear answer to the research question. In a short report with a single study, this might require no more than a sentence. In a longer report with multiple studies, it might require a paragraph or even two. The summary is often followed by a discussion of the theoretical implications of the research. Do the results provide support for any existing theories? If not, how can they be explained? Although you do not have to provide a definitive explanation or detailed theory for your results, you at least need to outline one or more possible explanations. In applied research—and often in basic research—there is also some discussion of the practical implications of the research. How can the results be used, and by whom, to accomplish some real-world goal?

The theoretical and practical implications are often followed by a discussion of the study’s limitations. Perhaps there are problems with its internal or external validity. Perhaps the manipulation was not very effective or the measures not very reliable. Perhaps there is some evidence that participants did not fully understand their task or that they were suspicious of the intent of the researchers. Now is the time to discuss these issues and how they might have affected the results. But do not overdo it. All studies have limitations, and most readers will understand that a different sample or different measures might have produced different results. Unless there is good reason to think they would have, however, there is no reason to mention these routine issues. Instead, pick two or three limitations that seem like they could have influenced the results, explain how they could have influenced the results, and suggest ways to deal with them.

Most discussions end with some suggestions for future research. If the study did not satisfactorily answer the original research question, what will it take to do so? What new research questions has the study raised? This part of the discussion, however, is not just a list of new questions. It is a discussion of two or three of the most important unresolved issues. This means identifying and clarifying each question, suggesting some alternative answers, and even suggesting ways they could be studied.

Finally, some researchers are quite good at ending their articles with a sweeping or thought-provoking conclusion. Darley and Latané (1968) [4] , for example, ended their article on the bystander effect by discussing the idea that whether people help others may depend more on the situation than on their personalities. Their final sentence is, “If people understand the situational forces that can make them hesitate to intervene, they may better overcome them” (p. 383). However, this kind of ending can be difficult to pull off. It can sound overreaching or just banal and end up detracting from the overall impact of the article. It is often better simply to end when you have made your final point (although you should avoid ending on a limitation).

References

The references section begins on a new page with the heading “References” centred at the top of the page. All references cited in the text are then listed in the format presented earlier. They are listed alphabetically by the last name of the first author. If two sources have the same first author, they are listed alphabetically by the last name of the second author. If all the authors are the same, then they are listed chronologically by the year of publication. Everything in the reference list is double-spaced both within and between references.
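The ordering rules above amount to a sort on the author surnames followed by the year. The sketch below shows one way to express that, assuming each reference is stored as a dictionary with an `authors` list of surnames and a `year`. That data layout, the function name, and the sample entries are all hypothetical, and the sketch ignores finer points such as initials and special characters.

```python
def reference_sort_key(ref):
    """Sort by author surnames in order (first, second, ...), then by year."""
    surnames = tuple(name.casefold() for name in ref["authors"])
    return (surnames, ref["year"])


# Hypothetical entries, for illustration only.
references = [
    {"authors": ["Smith", "Lee"], "year": 2010},
    {"authors": ["Smith", "Adams"], "year": 2012},
    {"authors": ["Brown"], "year": 2015},
    {"authors": ["Smith", "Adams"], "year": 2008},
]

for ref in sorted(references, key=reference_sort_key):
    print(" & ".join(ref["authors"]), ref["year"])
```

Because tuples compare element by element, two entries with the same first author fall back to the second author, and entries with identical authors fall back to the year.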

Appendices, Tables, and Figures

Appendices, tables, and figures come after the references. An appendix is appropriate for supplemental material that would interrupt the flow of the research report if it were presented within any of the major sections. An appendix could be used to present lists of stimulus words, questionnaire items, detailed descriptions of special equipment or unusual statistical analyses, or references to the studies that are included in a meta-analysis. Each appendix begins on a new page. If there is only one, the heading is “Appendix,” centred at the top of the page. If there is more than one, the headings are “Appendix A,” “Appendix B,” and so on, and they appear in the order they were first mentioned in the text of the report.

After any appendices come tables and then figures. Tables and figures are both used to present results. Figures can also be used to illustrate theories (e.g., in the form of a flowchart), display stimuli, outline procedures, and present many other kinds of information. Each table and figure appears on its own page. Tables are numbered in the order that they are first mentioned in the text (“Table 1,” “Table 2,” and so on). Figures are numbered the same way (“Figure 1,” “Figure 2,” and so on). A brief explanatory title, with the important words capitalized, appears above each table. Each figure is given a brief explanatory caption, where (aside from proper nouns or names) only the first word of each sentence is capitalized. More details on preparing APA-style tables and figures are presented later in the book.
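Because tables and figures are numbered in the order they are first mentioned, a quick automated check of a draft can catch numbering slips. The following is a minimal sketch, assuming the manuscript is available as plain text and that mentions look like “Table 1” or “Figure 2”. The function names and the sample sentences are hypothetical.

```python
import re


def first_mention_order(text, label="Table"):
    """Return the numbers of `label` in the order they are first mentioned in `text`."""
    seen = []
    for match in re.finditer(rf"\b{re.escape(label)} (\d+)\b", text):
        number = int(match.group(1))
        if number not in seen:
            seen.append(number)
    return seen


def numbered_in_order(text, label="Table"):
    """True if the first mentions run 1, 2, 3, ... with no gaps or swaps."""
    order = first_mention_order(text, label)
    return order == list(range(1, len(order) + 1))


# Hypothetical draft text, for illustration only.
draft = "Means appear in Table 1. Figure 1 shows the trend, and Table 2 lists the items."
print(first_mention_order(draft, "Table"))
print(numbered_in_order(draft, "Figure"))
```

A check like this only looks at the running text, so the numbers on the tables and figures themselves still need to be compared against it by hand.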

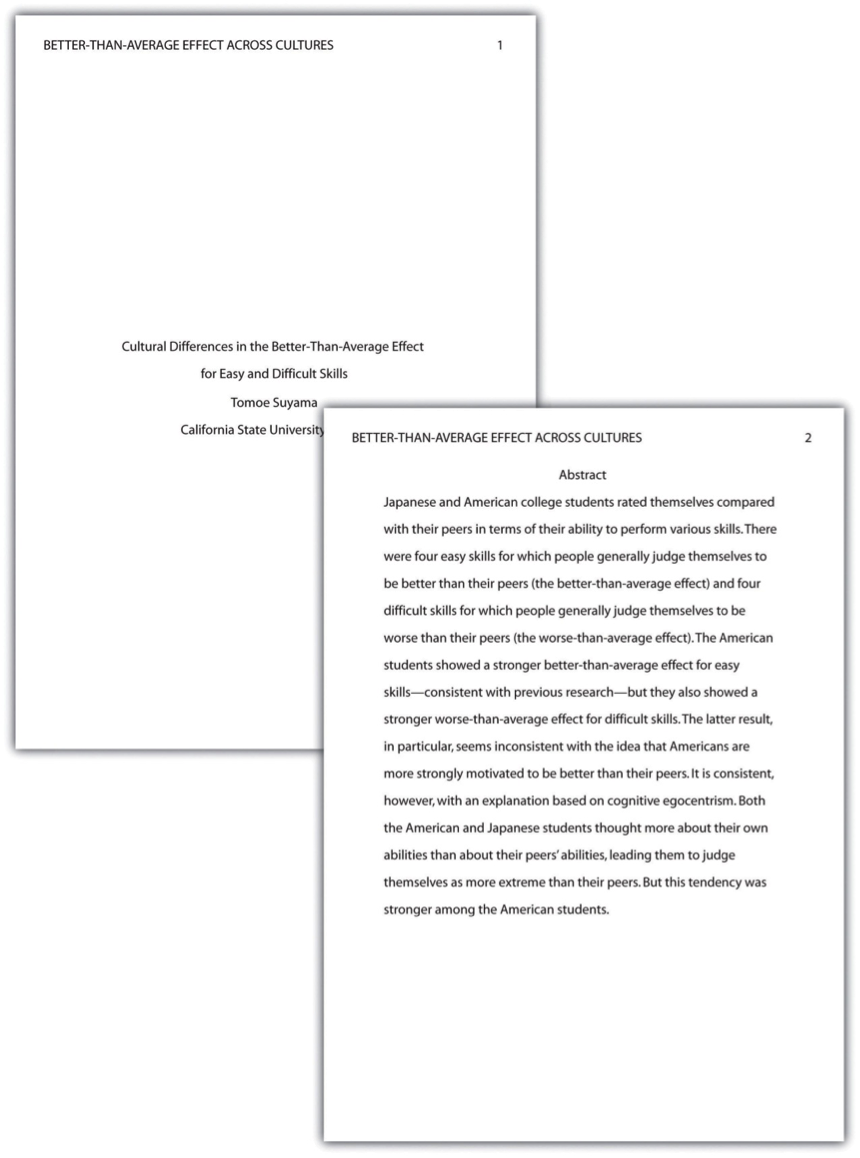

Sample APA-Style Research Report

Figures 11.2, 11.3, 11.4, and 11.5 show some sample pages from an APA-style empirical research report originally written by undergraduate student Tomoe Suyama at California State University, Fresno. The main purpose of these figures is to illustrate the basic organization and formatting of an APA-style empirical research report, although many high-level and low-level style conventions can be seen here too.

Key Takeaways

- An APA-style empirical research report consists of several standard sections. The main ones are the abstract, introduction, method, results, discussion, and references.

- The introduction consists of an opening that presents the research question, a literature review that describes previous research on the topic, and a closing that restates the research question and comments on the method. The literature review constitutes an argument for why the current study is worth doing.

- The method section describes the method in enough detail that another researcher could replicate the study. At a minimum, it consists of a participants subsection and a design and procedure subsection.

- The results section describes the results in an organized fashion. Each primary result is presented in terms of statistical results but also explained in words.

- The discussion typically summarizes the study, discusses theoretical and practical implications and limitations of the study, and offers suggestions for further research.

- Practice: Look through an issue of a general interest professional journal (e.g., Psychological Science). Read the opening of the first five articles and rate the effectiveness of each one from 1 (very ineffective) to 5 (very effective). Write a sentence or two explaining each rating.

- Practice: Find a recent article in a professional journal and identify where the opening, literature review, and closing of the introduction begin and end.

- Practice: Find a recent article in a professional journal and highlight in a different colour each of the following elements in the discussion: summary, theoretical implications, practical implications, limitations, and suggestions for future research.

Long Descriptions

Figure 11.1 long description: Table showing three ways of organizing an APA-style method section.

In the simple method, there are two subheadings: “Participants” (which might begin “The participants were…”) and “Design and procedure” (which might begin “There were three conditions…”).

In the typical method, there are three subheadings: “Participants” (“The participants were…”), “Design” (“There were three conditions…”), and “Procedure” (“Participants viewed each stimulus on the computer screen…”).

In the complex method, there are four subheadings: “Participants” (“The participants were…”), “Materials” (“The stimuli were…”), “Design” (“There were three conditions…”), and “Procedure” (“Participants viewed each stimulus on the computer screen…”).

- Bem, D. J. (2003). Writing the empirical journal article. In J. M. Darley, M. P. Zanna, & H. R. Roediger III (Eds.), The compleat academic: A practical guide for the beginning social scientist (2nd ed.). Washington, DC: American Psychological Association.

- Darley, J. M., & Latané, B. (1968). Bystander intervention in emergencies: Diffusion of responsibility. Journal of Personality and Social Psychology, 4, 377–383.

Empirical research report: A type of research article that describes one or more new empirical studies conducted by the authors.

Title page: The page at the beginning of an APA-style research report containing the title of the article, the authors’ names, and their institutional affiliation.

Abstract: A summary of a research study.

Introduction: The part of a manuscript, beginning on the third page, that contains the research question, the literature review, and comments about how to answer the research question.

Opening: An introduction to the research question and an explanation of why this question is interesting.

Literature review: A description of relevant previous research on the topic being discussed and an argument for why the research is worth addressing.

Closing: The end of the introduction, where the research question is reiterated and the method is commented upon.

Method: The section of a research report where the method used to conduct the study is described.

Results: The section of a research article where the main results of the study, including the results from statistical analyses, are presented.

Discussion: The section of a research report that summarizes the study's results and interprets them by referring back to the study's theoretical background.

Appendix: The part of a research report that contains supplemental material.

Research Methods in Psychology - 2nd Canadian Edition Copyright © 2015 by Paul C. Price, Rajiv Jhangiani, & I-Chant A. Chiang is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License , except where otherwise noted.


Guide to Writing the Results and Discussion Sections of a Scientific Article

A quality research paper combines in-depth research with good writing (Bordage, 2001). In addition, a research paper must present its information clearly, concisely, and effectively, in an organized structure and a logical manner (Sandercock, 2013).

In this article, we will take a closer look at the results and discussion section. Composing each of these carefully with sufficient data and well-constructed arguments can help improve your paper overall.

The results section of your research paper describes the main findings of your research, whereas the discussion section interprets those results for readers and explains their significance. The discussion should not repeat the results.

Let’s look more closely at how to organize each part properly and clearly.

How to Organize the Results Section

Since your results follow your methods, you’ll want to provide information about what you discovered from the methods you used, such as your research data. In other words, what were the outcomes of the methods you used?

You may also include information about the measurement of your data, variables, treatments, and statistical analyses.

To start, organize your research data according to their importance to your research questions. This section should focus on showing major results that support or reject your research hypothesis. Include your least important data as supplemental materials when submitting to the journal.

The next step is to prioritize your research data by importance, using subheadings to focus on the information that relates directly to your research questions.

The organization of the subheadings for the results section usually mirrors the methods section. It should follow a logical and chronological order.

Subheading organization

Subheadings within your results section will primarily detail the major findings of each important experiment. The first paragraph of your results section should be dedicated to your main findings, that is, the findings that answer your overall research question and lead to your conclusion (Hofmann, 2013).

In the book “Writing in the Biological Sciences,” author Angelika Hofmann recommends you structure your results subsection paragraphs as follows:

- Experimental purpose

- Interpretation

Each subheading may contain a combination of the following (Bahadoran, 2019; Hofmann, 2013, pp. 62-63):

- Text: to explain the research data.

- Figures: to display the research data and to show trends or relationships, for example using graphs or gel pictures.

- Tables: to present large data sets and exact values.

Decide on the best way to present your data — in the form of text, figures or tables (Hofmann, 2013).

Data or Results?

It is easy to confuse data and results. Data are the information (facts or numbers) that you collected in your research (Bahadoran, 2019).

Results, by contrast, are the text that presents the meaning of your research data (Bahadoran, 2019).

One mistake that authors often make is to use text to direct the reader to a specific table or figure without further explanation. This can confuse readers, who may interpret the data quite differently from what the authors had in mind. Briefly explain your data to make the information clear for readers.

Common Elements in Figures and Tables

Figures and tables present information about your research data visually. These visual elements help readers summarize, compare, and interpret large amounts of data at a glance. Use graphs or figures to compare groups or patterns, and use tables to present large quantities of data and exact values.

Several components are needed to create your figures and tables. These elements are important to sort your data based on groups (or treatments). It will be easier for the readers to see the similarities and differences among the groups.

When presenting your research data in the form of figures and tables, organize your data based on the steps of the research leading you into a conclusion.

Common elements of the figures (Bahadoran, 2019):

- Figure number

- Figure title

- Figure legend (for example a brief title, experimental/statistical information, or definition of symbols).

Tables in the results section may contain several elements (Bahadoran, 2019):

- Table number

- Table title

- Row headings (for example groups)

- Column headings

- Row subheadings (for example categories or groups)

- Column subheadings (for example categories or variables)

- Footnotes (for example statistical analyses)

Tips to Write the Results Section

- Direct the reader to the research data and explain the meaning of the data.

- Avoid using a repetitive sentence structure to explain a new set of data.

- Write and highlight important findings in your results.

- Use the same order as the subheadings of the methods section.

- Match the results with the research questions from the introduction. Your results should answer your research questions.

- Be sure to mention the figures and tables in the body of your text.

- Make sure there is no mismatch between the table number or the figure number in text and in figure/tables.

- Only present data that support the significance of your study. You can provide additional data in tables and figures as supplementary material.

How to Organize the Discussion Section

It’s not enough to use figures and tables in your results section to convince your readers about the importance of your findings. You need to support your results section by providing more explanation in the discussion section about what you found.

In the discussion section, based on your findings, you defend the answers to your research questions and create arguments to support your conclusions.

Below is a list of questions to guide you when organizing the structure of your discussion section (Viera et al., 2018):

- What experiments did you conduct and what were the results?

- What do the results mean?

- What were the important results from your study?

- How did the results answer your research questions?

- Did your results support your hypothesis or reject your hypothesis?

- What are the variables or factors that might affect your results?

- What were the strengths and limitations of your study?

- What other published works support your findings?

- What other published works contradict your findings?

- What possible factors might cause your findings to differ from other findings?

- What is the significance of your research?

- What are new research questions to explore based on your findings?

Organizing the Discussion Section

The structure of the discussion section may differ from one paper to another, but it commonly has a beginning, a middle, and an end.

One way to organize the structure of the discussion section is by dividing it into three parts (Ghasemi, 2019):

- The beginning: The first sentence of the first paragraph should state the importance and the new findings of your research. The first paragraph may also include answers to your research questions mentioned in your introduction section.

- The middle: The middle should contain the interpretations of the results to defend your answers, the strengths of the study, the limitations of the study, and an updated literature review that validates your findings.

- The end: The end concludes the study and the significance of your research.

Another way to organize the discussion section, proposed by Michael Docherty in the British Medical Journal, uses this structure (Docherty, 1999):

- Discussion of important findings

- Comparison of your results with other published works

- Include the strengths and limitations of the study

- Conclusion and possible implications of your study, including its significance (address why and how it is meaningful)

- Future research questions based on your findings

A final option is to structure your discussion this way (Hofmann, 2013, p. 104):

- First Paragraph: Provide an interpretation based on your key findings. Then support your interpretation with evidence.

- Secondary results

- Limitations

- Unexpected findings

- Comparisons to previous publications

- Last Paragraph: The last paragraph should provide a summary (conclusion) and detail the significance, implications, and potential next steps.

Remember, at the heart of the discussion section is presenting an interpretation of your major findings.

Tips to Write the Discussion Section

- Highlight the significance of your findings

- Mention how the study will fill a gap in knowledge.

- Indicate the implication of your research.

- Avoid generalizing, misinterpreting your results, or drawing a conclusion that your results do not support.

Aggarwal, R., & Sahni, P. (2018). The Results Section. In Reporting and Publishing Research in the Biomedical Sciences (pp. 21-38): Springer.

Bahadoran, Z., Mirmiran, P., Zadeh-Vakili, A., Hosseinpanah, F., & Ghasemi, A. (2019). The principles of biomedical scientific writing: Results. International journal of endocrinology and metabolism, 17(2).

Bordage, G. (2001). Reasons reviewers reject and accept manuscripts: the strengths and weaknesses in medical education reports. Academic medicine, 76(9), 889-896.

Cals, J. W., & Kotz, D. (2013). Effective writing and publishing scientific papers, part VI: discussion. Journal of clinical epidemiology, 66(10), 1064.

Docherty, M., & Smith, R. (1999). The case for structuring the discussion of scientific papers: Much the same as that for structuring abstracts. In: British Medical Journal Publishing Group.

Faber, J. (2017). Writing scientific manuscripts: most common mistakes. Dental press journal of orthodontics, 22(5), 113-117.

Fletcher, R. H., & Fletcher, S. W. (2018). The discussion section. In Reporting and Publishing Research in the Biomedical Sciences (pp. 39-48): Springer.

Ghasemi, A., Bahadoran, Z., Mirmiran, P., Hosseinpanah, F., Shiva, N., & Zadeh-Vakili, A. (2019). The Principles of Biomedical Scientific Writing: Discussion. International journal of endocrinology and metabolism, 17(3).

Hofmann, A. H. (2013). Writing in the biological sciences: a comprehensive resource for scientific communication. New York: Oxford University Press.

Kotz, D., & Cals, J. W. (2013). Effective writing and publishing scientific papers, part V: results. Journal of clinical epidemiology, 66(9), 945.

Mack, C. (2014). How to Write a Good Scientific Paper: Structure and Organization. Journal of Micro/ Nanolithography, MEMS, and MOEMS, 13. doi:10.1117/1.JMM.13.4.040101

Moore, A. (2016). What's in a Discussion section? Exploiting 2‐dimensionality in the online world…. Bioessays, 38(12), 1185-1185.

Peat, J., Elliott, E., Baur, L., & Keena, V. (2013). Scientific writing: easy when you know how: John Wiley & Sons.

Sandercock, P. M. L. (2012). How to write and publish a scientific article. Canadian Society of Forensic Science Journal, 45(1), 1-5.

Teo, E. K. (2016). Effective Medical Writing: The Write Way to Get Published. Singapore Medical Journal, 57(9), 523-523. doi:10.11622/smedj.2016156

Van Way III, C. W. (2007). Writing a scientific paper. Nutrition in Clinical Practice, 22(6), 636-640.

Vieira, R. F., Lima, R. C. d., & Mizubuti, E. S. G. (2019). How to write the discussion section of a scientific article. Acta Scientiarum. Agronomy, 41.


How to Write the Results/Findings Section in Research

What is the research paper Results section and what does it do?

The Results section of a scientific research paper represents the core findings of a study derived from the methods applied to gather and analyze information. It presents these findings in a logical sequence without bias or interpretation from the author, setting up the reader for later interpretation and evaluation in the Discussion section. A major purpose of the Results section is to break down the data into sentences that show its significance to the research question(s).

The Results section appears third in the section sequence in most scientific papers. It follows the presentation of the Methods and Materials and is presented before the Discussion section, although the Results and Discussion are presented together in many journals. This section answers the basic question “What did you find in your research?”

What is included in the Results section?

The Results section should include the findings of your study and ONLY the findings of your study. The findings include:

- Data presented in tables, charts, graphs, and other figures (may be placed into the text or on separate pages at the end of the manuscript)

- A contextual analysis of this data explaining its meaning in sentence form

- All data that corresponds to the central research question(s)

- All secondary findings (secondary outcomes, subgroup analyses, etc.)

If the scope of the study is broad, if you studied a variety of variables, or if the methodology used yields a wide range of different results, present only those results that are most relevant to the research question stated in the Introduction section.

As a general rule, any information that does not present the direct findings or outcome of the study should be left out of this section. Unless the journal requests that authors combine the Results and Discussion sections, explanations and interpretations should be omitted from the Results.

How are the results organized?

The best way to organize your Results section is “logically.” One logical and clear method of organizing research results is to provide them alongside the research questions—within each research question, present the type of data that addresses that research question.

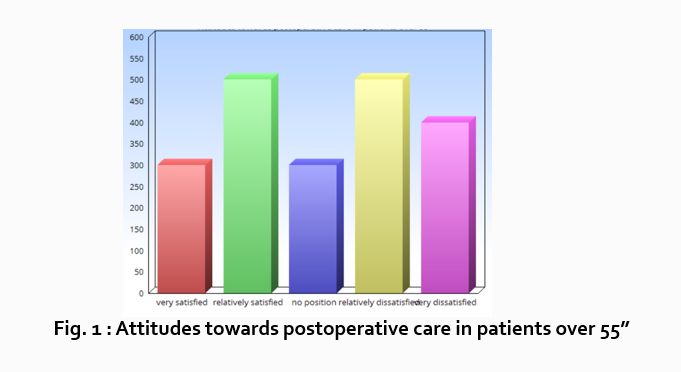

Let’s look at an example. Your research question is based on a survey among patients who were treated at a hospital and received postoperative care. Let’s say your first research question is:

“What do hospital patients over age 55 think about postoperative care?”

This can actually be represented as a heading within your Results section, though it might be presented as a statement rather than a question:

Attitudes towards postoperative care in patients over the age of 55

Now present the results that address this specific research question first. In this case, that might be a table illustrating data from a survey, which can include Likert items. Tables can also present standard deviations, probabilities, correlation matrices, etc.

Following this, present a content analysis, in words, of one end of the spectrum of the survey or data table. In our example case, start with the POSITIVE survey responses regarding postoperative care, using descriptive phrases. For example:

“Sixty-five percent of patients over 55 responded positively to the question ‘Are you satisfied with your hospital’s postoperative care?’ (Fig. 2).”

Include other results such as subcategory analyses. The amount of textual description used will depend on how much interpretation of tables and figures is necessary and how many examples the reader needs in order to understand the significance of your research findings.

Next, present a content analysis of another part of the spectrum of the same research question, perhaps the NEGATIVE or NEUTRAL responses to the survey. For instance:

“As Figure 1 shows, 15 out of 60 patients in Group A responded negatively to Question 2.”

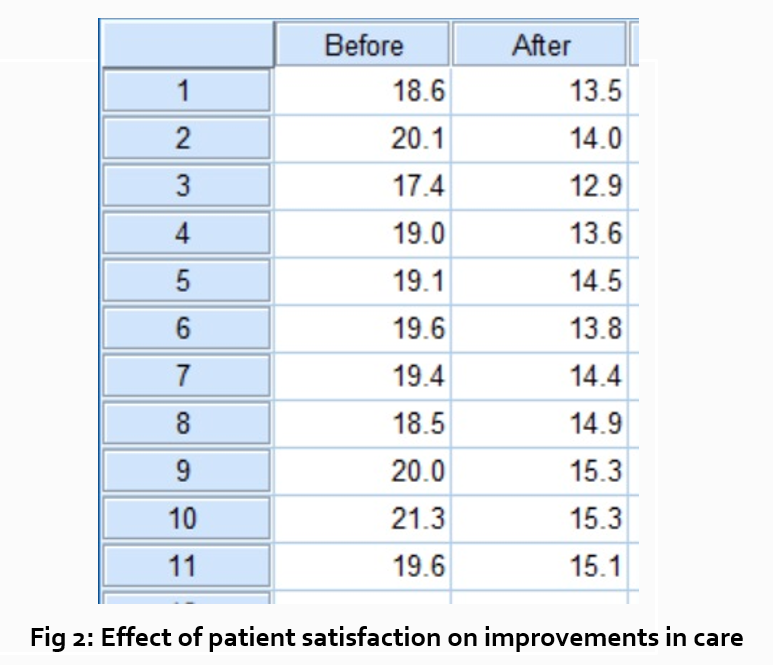
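The counts and percentages quoted in sentences like the ones above can be tallied directly from the raw survey responses. The following is a minimal sketch using only the Python standard library; the response data, the category labels, and the helper name `summarize_responses` are hypothetical and chosen only to mirror the numbers in the example sentences.

```python
from collections import Counter


def summarize_responses(responses):
    """Return {category: (count, percent)} for a list of survey answers."""
    total = len(responses)
    if total == 0:
        raise ValueError("no responses to summarize")
    counts = Counter(responses)
    # Percentages are rounded to one decimal place for reporting.
    return {cat: (n, round(100 * n / total, 1)) for cat, n in counts.items()}


# Hypothetical data: 60 patients in Group A answering Question 2.
group_a = ["negative"] * 15 + ["neutral"] * 6 + ["positive"] * 39

summary = summarize_responses(group_a)
n_neg, pct_neg = summary["negative"]

# Build a descriptive sentence of the kind used in a Results section.
sentence = (
    f"{n_neg} out of {len(group_a)} patients ({pct_neg}%) "
    "in Group A responded negatively to Question 2."
)
print(sentence)
```

Generating the sentence from the same tally that feeds the figure helps keep the numbers in the text and in the figure consistent.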

After you have assessed the data in one figure and explained it sufficiently, move on to your next research question. For example:

“How does patient satisfaction correspond to in-hospital improvements made to postoperative care?”

This kind of data may be presented through a figure or set of figures (for instance, a paired t-test table).

Explain the data you present, here in a table, with a concise content analysis:

“The p-value for the comparison between the before and after groups of patients was .03 (Fig. 2), indicating that the greater the dissatisfaction among patients, the more frequent the improvements that were made to postoperative care.”

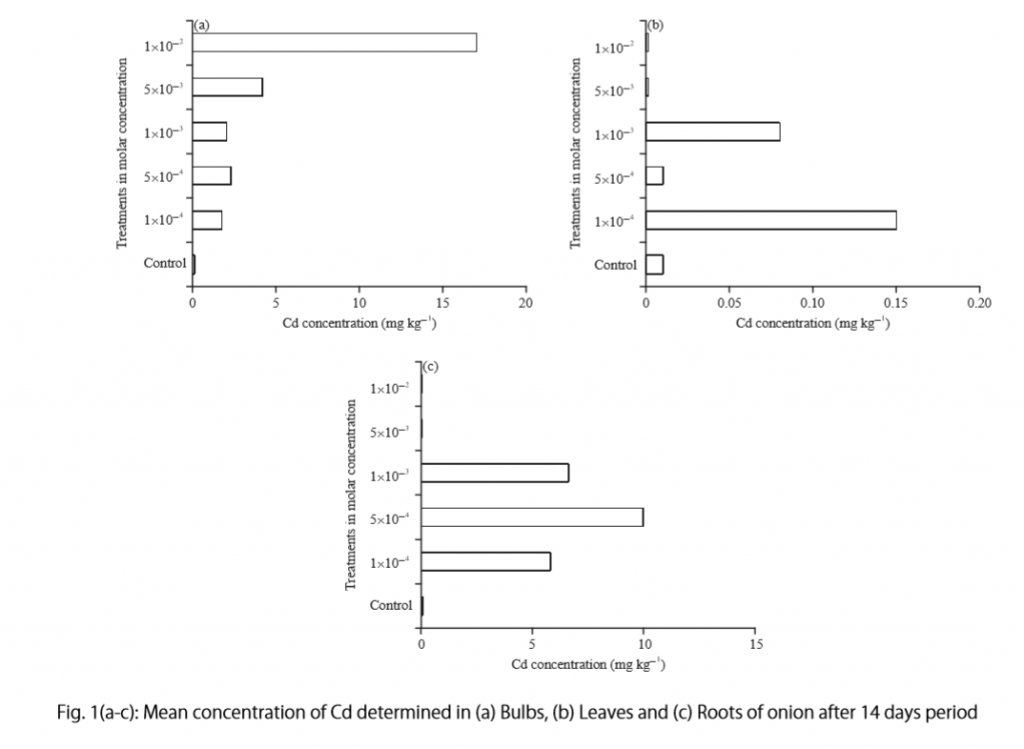
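To make the paired comparison concrete, here is a minimal sketch of how the test statistic behind such a table could be computed with the Python standard library. The satisfaction scores and the function name `paired_t` are hypothetical, not data from the study described above. The sketch stops at the t statistic and degrees of freedom; obtaining the p-value additionally requires the t distribution, for example through `scipy.stats.ttest_rel` if SciPy is available.

```python
import math
import statistics


def paired_t(before, after):
    """Return (t statistic, degrees of freedom, mean difference) for paired samples."""
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two pairs")
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation of the differences
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1, mean_diff


# Hypothetical satisfaction scores (1-5) for the same six patients,
# measured before and after changes to postoperative care.
before = [3, 2, 4, 3, 2, 3]
after = [4, 4, 4, 5, 3, 4]

t, df, mean_diff = paired_t(before, after)
report = f"t({df}) = {t:.2f}"
print(report)
```

The formatted string follows the common "t(df) = value" reporting pattern; check the target journal's instructions for the exact style it expects.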

Let’s examine another example of a Results section from a study on plant tolerance to heavy metal stress. In the Introduction section, the aims of the study are presented as “determining the physiological and morphological responses of Allium cepa L. towards increased cadmium toxicity” and “evaluating its potential to accumulate the metal and its associated environmental consequences.” The Results section presents data showing how these aims are achieved in tables alongside a content analysis, beginning with an overview of the findings:

“Cadmium caused inhibition of root and leave elongation, with increasing effects at higher exposure doses (Fig. 1a-c).”

The figure containing this data is cited in parentheses. Note that this author has combined three graphs into one single figure. Splitting the data into separate graphs that each focus on a specific aspect makes it easier for the reader to assess the findings, and consolidating these graphs into one figure saves space and makes it easy to locate the most relevant results.

Following this overall summary, the relevant data in the tables is broken down into greater detail in text form in the Results section.

- “Results on the bio-accumulation of cadmium were found to be the highest (17.5 mg kg⁻¹) in the bulb, when the concentration of cadmium in the solution was 1×10⁻² M and lowest (0.11 mg kg⁻¹) in the leaves when the concentration was 1×10⁻³ M.”

Captioning and Referencing Tables and Figures

Tables and figures are central components of your Results section, and you need to think carefully about the most effective way to use graphs and tables to present your findings. It is therefore crucial to know how to write strong figure captions and how to refer to them within the text of the Results section.

The most important advice one can give here as well as throughout the paper is to check the requirements and standards of the journal to which you are submitting your work. Every journal has its own design and layout standards, which you can find in the author instructions on the target journal’s website. Perusing a journal’s published articles will also give you an idea of the proper number, size, and complexity of your figures.

Regardless of which format you use, the figures should be placed in the order they are referenced in the Results section and be as clear and easy to understand as possible. If there are multiple variables being considered (within one or more research questions), it can be a good idea to split these up into separate figures. Subsequently, these can be referenced and analyzed under separate headings and paragraphs in the text.

To create a caption, consider the research question being asked and change it into a phrase. For instance, if one question is “Which color did participants choose?”, the caption might be “Color choice by participant group.” Or in our last research paper example, where the question was “What is the concentration of cadmium in different parts of the onion after 14 days?” the caption reads:

“Fig. 1(a-c): Mean concentration of Cd determined in (a) bulbs, (b) leaves, and (c) roots of onions after a 14-day period.”